This post is part of the Complete Guide to WooCommerce SEO.

Also in this series:

- How to Link WooCommerce to Google Search Console

- How to Update robots.txt for WooCommerce

- How to Create Page Redirects in WooCommerce

- 5 Tips to Enhance your WooCommerce Category Pages Using SEO

- 8 Ways to Optimise WooCommerce Images for SEO

- How to Optimise WooCommerce Product Pages for SEO

- 35 WordPress Tools and Resources to Improve Page Speed

Understanding what a robots.txt file is and how to use it is essential for the technical SEO of a WooCommerce website. If you’re unsure about robots.txt files, you are in the right place: we are going to break down what they are and how to update them for your WooCommerce site.

What is a robots.txt file?

Crawlers (also known as bots or spiders) play a significant role in digital marketing. They serve two purposes: helping search engines index and rank your pages, and supplying data and technical insights to marketing platforms.

However, there are times when you may want to stop certain pages being crawled. This is especially true of pages that add little value to the wider website. Some of these pages may even dilute the site’s value in search while still being vital for the user experience. Alternatively, your website might have so many low-value pages that they use up your crawl budget, leaving bots less time for the pages that deserve their attention.

This is where a robots.txt file helps. It is a plain-text file at the root of your domain that tells bots which URLs they may and may not crawl, so you can stop them crawling designated URLs needlessly.

WooCommerce is a WordPress plugin, and WordPress generates a robots.txt file for you by default. You can check your robots.txt file in two ways. The first is Google Search Console’s robots.txt tester. The second is simply to add “/robots.txt” to the end of your domain (for example, https://www.example.com/robots.txt) and open it in your browser.
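If you prefer to script the second check, the Python sketch below uses only the standard library. The hypothetical `robots_url` helper works out where the file lives for any page on your site, because robots.txt always sits at the root of the host. The hypothetical `parse_robots` helper loads the file’s text so you can query it with `urllib.robotparser`. The sample contents are an assumption based on the file WordPress commonly generates, and `example.com` is a placeholder domain.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def robots_url(page_url):
    """Return the robots.txt URL that governs page_url.

    robots.txt always sits at the root of a host, so the page's path,
    query string and fragment are discarded.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def parse_robots(text):
    """Parse robots.txt text offline (no network request is made)."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


# Assumed example of the file WordPress commonly generates.
SAMPLE = """\
User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php
"""

if __name__ == "__main__":
    print(robots_url("https://example.com/product/blue-hoodie/?colour=blue"))
    # https://example.com/robots.txt
    rp = parse_robots(SAMPLE)
    print(rp.can_fetch("*", "https://example.com/shop/"))      # True
    print(rp.can_fetch("*", "https://example.com/wp-admin/"))  # False
    # Caveat: urllib.robotparser applies the first matching rule, while
    # Google applies the longest one, so they disagree on admin-ajax.php.
```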

Why is Updating Robots.txt Important for WooCommerce Websites?

Often overlooked yet important, the robots.txt file is an integral part of website optimisation. This small text document governs how search engines crawl your WooCommerce site, and keeping it up to date is a simple way to improve visibility, indexing and overall site performance.

The Why:

Streamlined Crawling: Regularly refining your robots.txt makes search engine crawling more efficient. Focusing bots on essential pages reduces unnecessary server load, which helps keep the site fast for shoppers.

Mitigating Duplication: E-commerce sites often generate multiple URLs for the same product listings through filters and sorting options. An up-to-date robots.txt file stops bots crawling these duplicates, protecting your SEO efforts.

Enhanced Privacy: Ask search engines to stay out of sensitive areas of your site, such as customer account pages, so those pages are less likely to surface in search results.

SEO Enhancement: Careful tuning of your robots.txt influences which pages are crawled and how your site appears in search results. This, in turn, can improve visibility, click-through rates and overall SEO performance.

Dynamic Adaptation: As your site evolves, so should your robots.txt. Regular updates ensure that new sections are crawled, so you get the full benefit of organic traffic.
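For the duplication point above, one common approach is to disallow the query parameters that sorting and filtering add to WooCommerce URLs. Google supports `*` (any run of characters) and `$` (end of URL) in rules and applies the longest matching rule, as set out in RFC 9309. Python’s `urllib.robotparser` does not handle wildcards, so the sketch below uses a small hand-written matcher that follows those rules (`pattern_to_regex` and `is_allowed` are hypothetical helpers). The `orderby` and `filter_` parameter names are illustrative, so check which parameters your own theme and plugins produce.

```python
import re


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regex.

    '*' matches any run of characters and a trailing '$' anchors the
    pattern to the end of the path; everything else is literal. Patterns
    match from the start of the path, as described in RFC 9309.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(rules, path):
    """Decide whether path may be crawled under one group of rules.

    rules is a list of ("allow" | "disallow", pattern) tuples. The longest
    matching pattern wins and "allow" wins a tie; no match means allowed.
    """
    best_len, best_allow = -1, True
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty value matches nothing
        if pattern_to_regex(pattern).match(path):
            allow = directive == "allow"
            if len(pattern) > best_len or (len(pattern) == best_len and allow):
                best_len, best_allow = len(pattern), allow
    return best_allow


# Hypothetical rules for WooCommerce sort and layered-navigation URLs.
RULES = [
    ("disallow", "/*orderby="),
    ("disallow", "/*filter_"),
]

for path in ["/shop/?orderby=price",
             "/product-category/hoodies/?filter_colour=blue",
             "/product/blue-hoodie/"]:
    print(path, is_allowed(RULES, path))
# /shop/?orderby=price False
# /product-category/hoodies/?filter_colour=blue False
# /product/blue-hoodie/ True
```

Because the rule starts with `/*`, it catches `orderby=` wherever it appears in the query string, not only as the first parameter.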

Benefits of Updating Robots.txt for WooCommerce

Precision in SEO Enhancement: Refining your robots.txt gives you precise control over what search engines prioritise on your WooCommerce site. This helps essential, relevant content stand out in search results, contributing to stronger SEO and more organic traffic.

Accelerated Indexing: An up-to-date robots.txt file helps search engine bots crawl your site more efficiently. With clear directives on which pages to visit and which to skip, your vital pages can be indexed sooner. This improves your site’s visibility and gets new products and content in front of potential customers faster.

Resource Allocation Optimisation: E-commerce sites often have complex hierarchies of categories, filters and product variations. Updating robots.txt lets you steer search engines away from resource-intensive sections, which reduces unnecessary load on your server and helps keep the shopping experience fast.

Diminished Duplicate Content: Duplicate content can dilute your SEO efforts and confuse search engines. An up-to-date robots.txt file stops bots crawling duplicate pages created by product filters or sorting options, helping to preserve your site’s ranking potential.

Safeguarding Confidentiality: WooCommerce websites handle sensitive customer data during transactions. Updating robots.txt asks search engines to stay out of private or sensitive sections of your site. It is not a security control, though. The file is publicly readable and only compliant bots obey it, so protecting customer information still depends on login protection and HTTPS.

Dynamic Adaptation: As your WooCommerce store evolves, your robots.txt should follow suit. Regular updates let you account for new products, categories and site features, so that search engines crawl and display your latest offerings accurately.
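To put the confidentiality point into practice, the cart, checkout and account pages are the usual candidates in a WooCommerce store. The sketch below assumes the default `/cart/`, `/checkout/` and `/my-account/` slugs, which may differ on your store. It uses Python’s `urllib.robotparser` to list the paths a crawler would be refused. The `blocked_paths` helper is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumed default WooCommerce page slugs; adjust to match your store.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /checkout/
Disallow: /my-account/
"""


def blocked_paths(robots_txt, paths, agent="*"):
    """Return the subset of paths the given agent may not crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(agent, p)]


if __name__ == "__main__":
    print(blocked_paths(ROBOTS_TXT, ["/shop/", "/cart/",
                                     "/checkout/order-received/",
                                     "/my-account/orders/"]))
    # ['/cart/', '/checkout/order-received/', '/my-account/orders/']
```

Because each rule matches by prefix, sub-pages such as the order-received screen are covered too, while `/shop/` stays crawlable.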

How to Access your robots.txt File for WooCommerce

Let’s now look at how to update the robots.txt file for your WooCommerce website. Before we start, make sure you have installed the Yoast SEO plugin on your site. Yoast is a well-established plugin for WordPress sites, designed to improve your website’s SEO and readability. Yoast offers paid packages, but the free version of the plugin is all you need for this task.

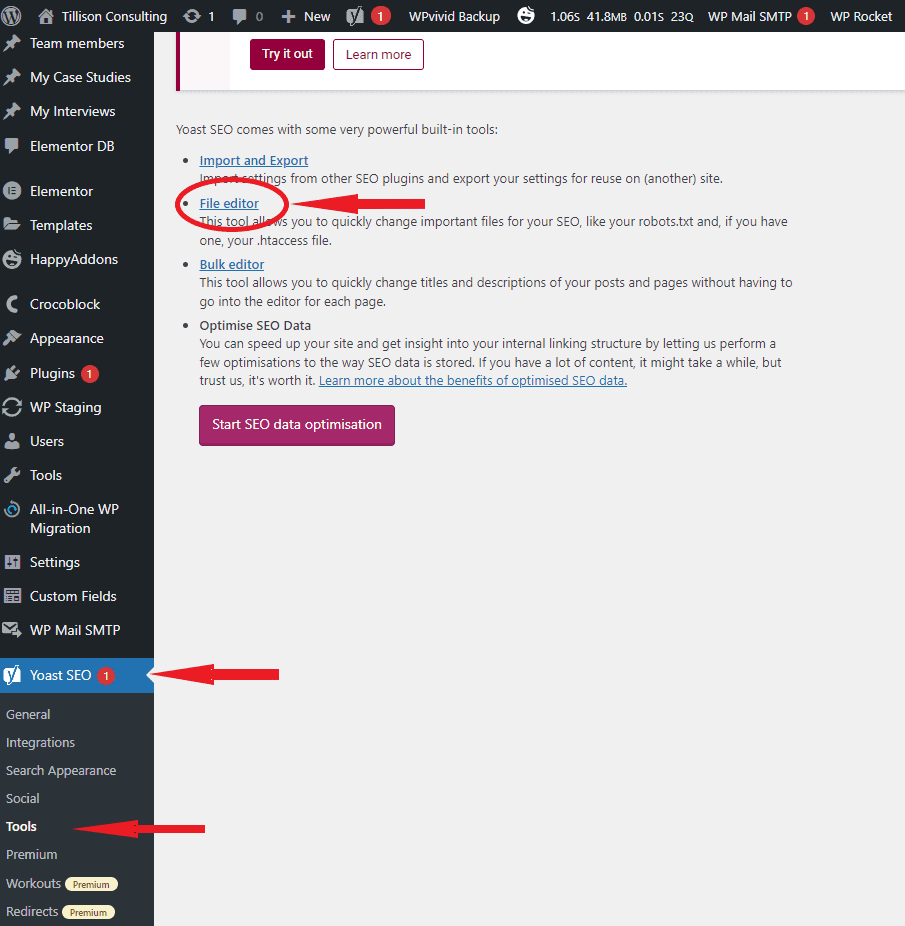

Once Yoast SEO is installed, log in to your site’s WordPress dashboard. Open the Yoast SEO section in the left-hand menu, then select “Tools”, as shown in the illustrations below. Within Tools, click “File editor”.

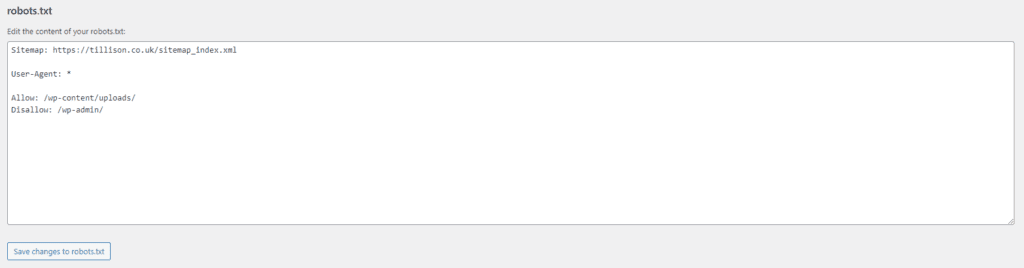

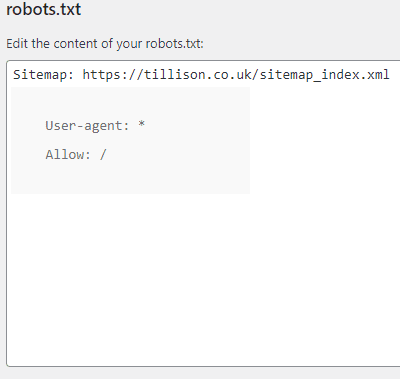

You will now see the robots.txt file editor, where you can change which parts of your site bots are allowed to crawl. The next question is what you want to exclude: a single page, a particular file, or your entire website? The following section explains how to edit the robots.txt file for each of these goals.

How to Edit your robots.txt File for WooCommerce

Now that you know how to access your robots.txt file, here are a few rules that may be useful for your WooCommerce website.

Blocking a Single File or Folder

You may want to stop bots accessing a specific file or directory, either to conserve your crawl budget or because it matters less than other parts of your site.

For instance, to stop bots accessing your wp-admin folder, you can use the rule shown in the image below.

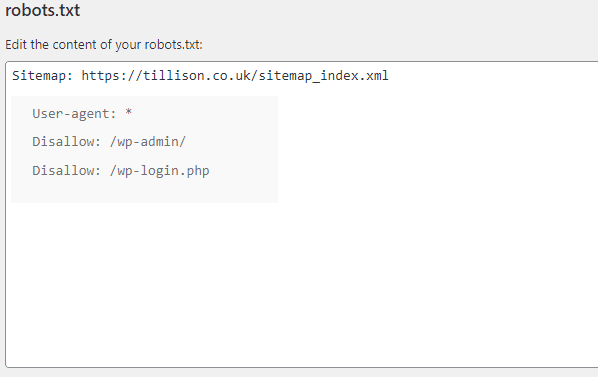
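In text form, a rule like the one in the image above usually looks like the `ROBOTS_TXT` string in this Python sketch. The sketch then checks a few paths with the standard `urllib.robotparser` module. The exact rules are a typical example rather than a copy of the image. The `Allow` line for `admin-ajax.php` mirrors WordPress’s own default.

```python
from urllib.robotparser import RobotFileParser

# A typical rule set for keeping bots out of the WordPress admin area.
# The Allow line keeps admin-ajax.php reachable, which some front-end
# features rely on. Placing it first makes first-match parsers such as
# urllib.robotparser agree with Google's longest-match behaviour.
ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ["/wp-admin/", "/wp-admin/options.php",
             "/wp-admin/admin-ajax.php", "/shop/"]:
    print(path, parser.can_fetch("*", path))
# /wp-admin/ False
# /wp-admin/options.php False
# /wp-admin/admin-ajax.php True
# /shop/ True
```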

Blocking a Specific Bot from Crawling your Site

We wouldn’t recommend this approach, as it could mean missing out on organic traffic from that search engine.

However, if you had a particular reason to block a specific search engine bot, such as Bing’s crawler, you can do so by updating your robots.txt file, as shown in the image below.

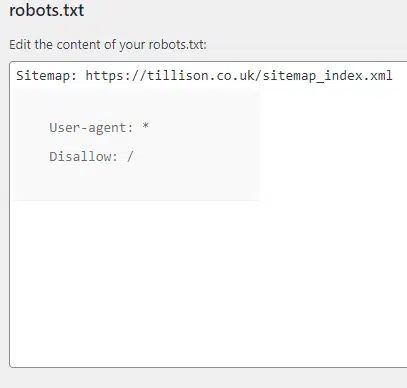
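Written out as text, a rule of this kind names the crawler’s user-agent token (Bing’s is `Bingbot`) and disallows the root path. This Python sketch uses `urllib.robotparser` to show that only the named crawler is refused. The agent names passed to `can_fetch` are illustrative.

```python
from urllib.robotparser import RobotFileParser

# Block Bing's crawler from the whole site. No other group exists, so
# every other bot remains unrestricted.
ROBOTS_TXT = """\
User-agent: Bingbot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.can_fetch("Bingbot", "/shop/"))    # False
print(parser.can_fetch("Googlebot", "/shop/"))  # True
```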

Blocking All Bots from Crawling your Entire WooCommerce Site

If your WooCommerce website is live, it’s unlikely you would ever need this input. However, if your site is still in development and you want to stop bots crawling it entirely, add a “User-agent: *” line followed by “Disallow: /”. This applies to every bot and every URL on your site.
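The Python sketch below uses `urllib.robotparser` to check the site-wide block (`User-agent: *` with `Disallow: /`). Every crawler is refused every URL, because every path starts with `/`. Remember to remove the rule before launch. Also note that robots.txt stops crawling but does not guarantee that pages stay out of search results. Password protection is the more reliable way to hide a development site.

```python
from urllib.robotparser import RobotFileParser

# Block every compliant crawler from every URL, e.g. while the shop is in
# development. "*" targets all user-agents and "/" prefixes every path.
ROBOTS_TXT = """\
User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for agent in ["Googlebot", "Bingbot"]:
    print(agent, parser.can_fetch(agent, "/"),
          parser.can_fetch(agent, "/product/blue-hoodie/"))
# Googlebot False False
# Bingbot False False
```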

Allowing All Bots to Crawl your WooCommerce Site

Lastly, here is how to give all bots unrestricted access to your site. You may have blocked a bot by mistake, or blocked one deliberately and now be ready to let it crawl freely. In either case, replace the restrictive rules with a “User-agent: *” line followed by an empty “Disallow:” line, which blocks nothing.
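The Python sketch below uses `urllib.robotparser` to check the allow-all form: `User-agent: *` with an empty `Disallow:` value, which restricts nothing. `Allow: /` is an equivalent alternative for crawlers that support `Allow`.

```python
from urllib.robotparser import RobotFileParser

# An empty Disallow value blocks nothing, so every crawler may fetch
# every URL.
ROBOTS_TXT = """\
User-agent: *
Disallow:
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.can_fetch("Googlebot", "/checkout/"))  # True
print(parser.can_fetch("Bingbot", "/shop/"))        # True
```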

How to Test your robots.txt Changes

Changes to your robots.txt file take effect as soon as you save them, but search engines only pick them up the next time they fetch the file (Google generally caches it for up to 24 hours). A valuable free resource here is Google’s robots.txt Tester, which shows whether your robots.txt file blocks Google’s crawlers from specific URLs on your website. Google has since retired the Tester in favour of the robots.txt report in Search Console.

Note that you need Google Search Console set up for this. If you haven’t done so yet, our guide on how to link your WooCommerce site to Google Search Console walks you through it. Here at Tillison, we offer SEO for eCommerce businesses, along with a range of other digital marketing services.
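Alongside Google’s tools, you can run a quick local sanity check on a draft before publishing it. The Python sketch below reports two kinds of problem: pages you expect to be crawlable that the draft blocks, and pages you expect to be blocked that it leaves open. The `check_robots` helper, the draft and the paths are all hypothetical. The sketch relies on `urllib.robotparser`, which applies the first matching rule and ignores wildcards, whereas Google applies the longest match. Treat a clean result as a first pass, not proof.

```python
from urllib.robotparser import RobotFileParser


def check_robots(robots_txt, must_allow, must_block, agent="Googlebot"):
    """Return human-readable problems found in a draft robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    problems = []
    for path in must_allow:
        if not parser.can_fetch(agent, path):
            problems.append(f"blocked but should be crawlable: {path}")
    for path in must_block:
        if parser.can_fetch(agent, path):
            problems.append(f"crawlable but should be blocked: {path}")
    return problems


# Hypothetical draft that forgets to block the checkout.
DRAFT = """\
User-agent: *
Disallow: /cart/
"""

if __name__ == "__main__":
    for problem in check_robots(
        DRAFT,
        must_allow=["/", "/shop/", "/product/blue-hoodie/"],
        must_block=["/cart/", "/checkout/"],
    ):
        print(problem)
    # crawlable but should be blocked: /checkout/
```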

Common Mistakes to Avoid When Updating Robots.txt for WooCommerce

Although updating the robots.txt file for your WooCommerce website brings many benefits, it pays to proceed with caution. Mistakes can quietly harm your site’s visibility in search. Here are the most common errors to avoid when editing your robots.txt file:

Blocking Vital Pages: One of the most serious mistakes is accidentally blocking search engines from important pages. Overly broad disallow rules can cut off access to key product pages, reducing their visibility and, in turn, sales.

Syntax Errors: robots.txt only works if each directive is written correctly. Crawlers generally ignore lines they cannot parse. A misspelt directive or a missing colon therefore silently disables that rule and can cause unintended crawling behaviour. Double-check the syntax of every line.
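The Python sketch below is a deliberately small linter. The `lint_robots` helper and its list of known directives are assumptions, not an official validator. It flags the kinds of slip-ups described above: lines without a colon, misspelt directive names, and rules that appear before any `User-agent` line, which crawlers ignore.

```python
# Directives in common use; anything else is probably a typo.
KNOWN = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def lint_robots(text):
    """Return (line_number, message) pairs for suspicious lines."""
    issues = []
    seen_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if ":" not in line:
            issues.append((number, "missing ':' separator"))
            continue
        name = line.split(":", 1)[0].strip().lower()
        if name not in KNOWN:
            issues.append((number, f"unknown directive '{name}'"))
        elif name == "user-agent":
            seen_agent = True
        elif name in ("allow", "disallow") and not seen_agent:
            issues.append((number, "rule before any User-agent line"))
    return issues


# Hypothetical draft containing three mistakes.
DRAFT = """\
Disallow: /cart/
User-agent: *
Dissallow: /checkout/
Disallow /my-account/
"""

for number, message in lint_robots(DRAFT):
    print(f"line {number}: {message}")
# line 1: rule before any User-agent line
# line 3: unknown directive 'dissallow'
# line 4: missing ':' separator
```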

Blocking CSS and JavaScript Files: Modern search engines rely on CSS and JavaScript to understand your site’s layout and content. Blocking these files in robots.txt can stop search engines rendering your pages correctly, which can undermine your rankings.

Blocking Images and Media: Preventing search engines from accessing images and media files affects how your products appear in search results and can keep them out of image search. Make sure your robots.txt file allows media assets to be crawled.
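To check the two points above, the Python sketch below uses `urllib.robotparser` to list which asset URLs a draft would block. The draft, the asset paths (including the `storefront` theme folder) and the `blocked_assets` helper are hypothetical. Substitute real paths from your own site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical over-restrictive draft: both folders hold assets that
# search engines need to render pages and index product images.
DRAFT = """\
User-agent: *
Disallow: /wp-includes/
Disallow: /wp-content/
"""

# Illustrative asset paths; real paths depend on your theme and plugins.
ASSETS = [
    "/wp-includes/js/jquery/jquery.min.js",
    "/wp-content/themes/storefront/style.css",
    "/wp-content/uploads/2024/01/blue-hoodie.jpg",
]


def blocked_assets(robots_txt, assets, agent="Googlebot"):
    """List the asset URLs that the given crawler is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in assets if not parser.can_fetch(agent, path)]


if __name__ == "__main__":
    print(blocked_assets(DRAFT, ASSETS))  # all three asset paths
    fixed = "User-agent: *\nDisallow: /wp-admin/\n"
    print(blocked_assets(fixed, ASSETS))  # []
```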

Caution with Wildcards: Wildcards (*) are useful, but careless use can have unintended results and block large parts of your site by accident.
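The Python sketch below shows how a loose wildcard spreads. Python’s `urllib.robotparser` does not support wildcards, so the hypothetical `pattern_matches` helper translates a pattern into a regular expression itself. It follows the RFC 9309 conventions Google uses: `*` for any run of characters and a trailing `$` for the end of the path. The paths and the `flash-sale` page are hypothetical.

```python
import re


def pattern_matches(pattern, path):
    """True if a robots.txt path pattern matches path.

    '*' matches any run of characters, a trailing '$' anchors the end,
    and matching always starts at the beginning of the path.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.match(regex + ("$" if anchored else ""), path) is not None


PATHS = [
    "/flash-sale/",
    "/product-category/wholesale/",
    "/product/salesman-bag/",
]

# Intended to block only the flash-sale landing page (hypothetical).
for pattern in ["/*sale", "/flash-sale/"]:
    hits = [p for p in PATHS if pattern_matches(pattern, p)]
    print(pattern, "->", hits)
# /*sale -> ['/flash-sale/', '/product-category/wholesale/', '/product/salesman-bag/']
# /flash-sale/ -> ['/flash-sale/']
```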

Mindful Disallow Patterns: Take care with disallow patterns. A rule matches any URL that begins with the given path, so a broad rule for one folder can also block subfolders and similarly named URLs that hold valuable content.
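Because rules match by prefix, a rule written as `/product` also covers WooCommerce’s default `/product-category/` and `/product-tag/` bases. The Python sketch below assumes you only meant to block thin product-tag archives. It uses `urllib.robotparser` to compare the broad rule with a precise one. The paths and the `blocked` helper are illustrative.

```python
from urllib.robotparser import RobotFileParser

# Intended goal (hypothetical): keep bots out of thin product-tag archives.
BROAD = "User-agent: *\nDisallow: /product\n"         # prefix catches too much
PRECISE = "User-agent: *\nDisallow: /product-tag/\n"  # only the tag archives

# Illustrative URLs using WooCommerce's default permalink bases.
PATHS = ["/product/blue-hoodie/", "/product-category/hoodies/",
         "/product-tag/cotton/"]


def blocked(robots_txt, paths):
    """Return the paths that a generic crawler ("*") may not fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch("*", path)]


print(blocked(BROAD, PATHS))    # all three paths
print(blocked(PRECISE, PATHS))  # ['/product-tag/cotton/']
```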

Vigilance in Monitoring Changes: Your WooCommerce website changes over time. If you don’t regularly review and update your robots.txt file as you add pages, products or categories, you risk missing indexing opportunities.

Thorough Testing: Test your revised robots.txt file thoroughly before putting it live. Use the tools search engines provide to check crawling behaviour and confirm that your changes have the intended effect.

User-agent Precision: Rules are grouped by user-agent, and a crawler follows only the most specific group that matches its name, ignoring the general “*” group. An incorrect or misspelt user-agent can therefore lead to unexpected crawling behaviour.
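The Python sketch below uses `urllib.robotparser` to illustrate this group rule. Like Google, the parser checks named groups before falling back to `*`. The rules are hypothetical. Once a `Googlebot` group exists, Googlebot stops obeying the cart and checkout rules in the `*` group unless they are repeated in its own group.

```python
from urllib.robotparser import RobotFileParser

# Once a crawler finds a group naming it, it follows only that group and
# ignores the "*" group, so shared rules must be repeated.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /checkout/

User-agent: Googlebot
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.can_fetch("Bingbot", "/cart/"))        # False: falls back to "*"
print(parser.can_fetch("Googlebot", "/cart/"))      # True: "*" rules ignored
print(parser.can_fetch("Googlebot", "/wp-admin/"))  # False
```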

Acknowledging HTTPS/HTTP Variants: Search engines treat each protocol and host separately, so the http:// and https:// versions of your site each have their own robots.txt. Make sure the file is accessible and correct on both. Neglecting this can lead to inconsistent crawling between the two versions.