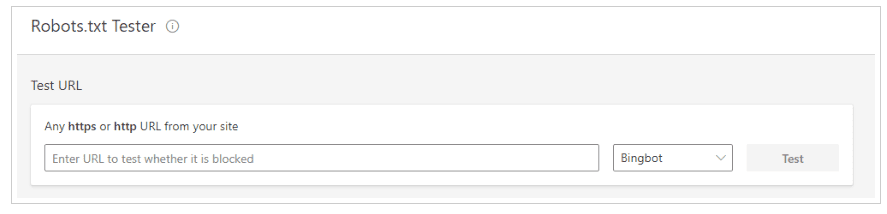

Just over a month after launching its new Webmaster Tools, Bing has announced its enhanced robots.txt tester tool. You are now able to analyse your robots.txt file and find out if there are any issues that would stop Bing from crawling and indexing your site.

Using the new tool couldn’t be simpler. All you have to do is enter the URL you want to check into the field on the tester. You can even toggle between Bingbot and AdIdxBot, the crawler used by Bing Ads.

Source: bing.com

Bing’s robots.txt tester checks for allow/disallow statements, and it can check a page seconds after you’ve made any updates.

The tool will also display the robots.txt file in its editor in four variations:

- http://

- https://

- http://www.

- https://www.
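
To see which four addresses this covers for your own site, here is a minimal Python sketch. The helper name `robots_txt_variants` and the `example.com` domain are made up for illustration; this is not part of Bing's tool, just a quick way to list the URLs you might want to check by hand.

```python
from urllib.parse import urlsplit


def robots_txt_variants(site: str) -> list[str]:
    """Return the four robots.txt URLs for a site:
    http and https, each with and without the www. prefix."""
    # Accept either a bare host ("example.com") or a full URL.
    host = urlsplit(site if "//" in site else f"//{site}").hostname or ""
    # Strip a leading "www." so both forms can be rebuilt consistently.
    bare = host[4:] if host.startswith("www.") else host
    return [
        f"{scheme}://{prefix}{bare}/robots.txt"
        for prefix in ("", "www.")
        for scheme in ("http", "https")
    ]


if __name__ == "__main__":
    for url in robots_txt_variants("example.com"):
        print(url)
```

Each of these addresses can serve a different file if your redirects are not set up consistently, which is presumably why the tester shows all four.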

The tester goes further still and guides you through the fetch and upload process.

Bing says:

“Webmasters can submit a URL to the robots.txt Tester tool and it operates as Bingbot and BingAdsBot would, to check the robots.txt file and verifies if the URL has been allowed or blocked accordingly.”

What is a robots.txt file?

When it comes to SEO, having an accurate robots.txt file is essential. It tells search engine crawlers which pages or files they can or can’t request. In fact, it’s one of the few ways you can control search engine activity on your website.
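
To make this concrete, here is a small sketch that uses Python's built-in `urllib.robotparser` module to read a made-up robots.txt file and check whether a crawler may request a URL. The sample rules, the `is_allowed` helper and the `example.com` URLs are assumptions for illustration only. Python's parser is also not Bing's, so edge cases may be handled differently from the real Bingbot.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt: one group for Bingbot, one for every other crawler.
SAMPLE_ROBOTS_TXT = """\
User-agent: bingbot
Disallow: /private/

User-agent: *
Disallow: /tmp/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the rules in robots_txt let user_agent request url."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    checks = [
        ("bingbot", "https://example.com/private/report.html"),
        ("bingbot", "https://example.com/blog/"),
        ("AdIdxBot", "https://example.com/private/report.html"),
        ("AdIdxBot", "https://example.com/tmp/cache.html"),
    ]
    for agent, url in checks:
        verdict = "allowed" if is_allowed(SAMPLE_ROBOTS_TXT, agent, url) else "blocked"
        print(f"{agent:10} {url} -> {verdict}")
```

In this sample, a crawler with its own group follows only that group, so Bingbot is blocked from `/private/` but is not affected by the `/tmp/` rule, while any other crawler falls back to the `*` group.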

Mistakes in your robots.txt file could cost you rankings – that’s one of the reasons why Bing’s robots.txt tester will be handy. It’ll spot any errors you may have missed.

Have you used Bing’s new robots.txt file tester? Let us know how you got on in the comments, or tweet us @TeamTillison.